Z. Liu, Z. Li, J. Wang, and Y. He

in Proceedings of the Thirty-Eighth AAAI Conference on Artificial Intelligence (AAAI'24)

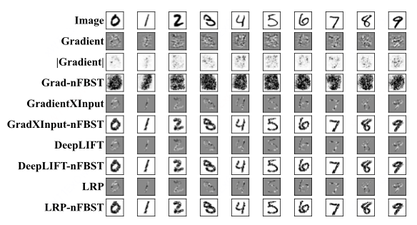

Significance testing aims to determine, given observations, whether a proposition about the population distribution is true. However, traditional significance testing often requires deriving the distribution of the test statistic, and so fails to handle complex nonlinear relationships. In this paper, we propose Full Bayesian Significance Testing for neural networks, called nFBST, to overcome this limitation of traditional approaches in characterizing relationships. A Bayesian neural network is used to fit nonlinear and multi-dimensional relationships with small errors, and computing the evidence value avoids difficult theoretical derivations. In addition, nFBST can test not only global significance but also local and instance-wise significance, which previous testing methods do not address. Moreover, nFBST is a general framework that can be extended according to the measure selected, yielding variants such as Grad-nFBST, LRP-nFBST, DeepLIFT-nFBST, and LIME-nFBST. A range of experiments on both simulated and real data demonstrates the advantages of our method.
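To make the "evidence value" mentioned in the abstract more concrete, here is a minimal sketch of how a Full Bayesian Significance Test for a point null hypothesis can be approximated from posterior samples. It is not the released implementation. It assumes the quantity under test is a scalar (for instance, a feature-importance measure drawn from a Bayesian neural network posterior), that the null hypothesis is that this quantity equals a single value, and that a Gaussian kernel density estimate is an adequate stand-in for the posterior density. The names `gaussian_kde_pdf` and `fbst_evidence` are hypothetical.

```python
import numpy as np


def gaussian_kde_pdf(samples, points, bandwidth=None):
    """Evaluate a 1-D Gaussian kernel density estimate at `points`."""
    samples = np.asarray(samples, dtype=float)
    points = np.atleast_1d(np.asarray(points, dtype=float))
    n = samples.size
    if bandwidth is None:
        # Normal-reference rule of thumb (an assumption, not taken from the paper).
        bandwidth = 1.06 * samples.std(ddof=1) * n ** (-1 / 5)
    # Pairwise standardized distances: shape (len(points), n).
    z = (points[:, None] - samples[None, :]) / bandwidth
    kernel_sum = np.exp(-0.5 * z ** 2).sum(axis=1)
    return kernel_sum / (n * bandwidth * np.sqrt(2 * np.pi))


def fbst_evidence(samples, null_value=0.0):
    """Approximate the evidence in favor of H0: theta == null_value.

    The tangential set holds all theta whose posterior density exceeds
    the density at the null value. The evidence for H0 is one minus the
    posterior mass of that set, estimated here as the fraction of
    samples whose density does not exceed the density at the null.
    """
    samples = np.asarray(samples, dtype=float)
    density_at_samples = gaussian_kde_pdf(samples, samples)
    density_at_null = gaussian_kde_pdf(samples, null_value)[0]
    return float(np.mean(density_at_samples <= density_at_null))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic stand-ins for posterior samples of a feature-importance measure.
    relevant = rng.normal(loc=3.0, scale=1.0, size=1000)
    irrelevant = rng.normal(loc=0.0, scale=1.0, size=1000)
    print("evidence for H0 (relevant feature):  ", fbst_evidence(relevant))
    print("evidence for H0 (irrelevant feature):", fbst_evidence(irrelevant))
```

A small evidence value suggests rejecting the null hypothesis that the feature has no effect, while a value close to one is compatible with it. Because the posterior samples can be collected over the whole dataset, over a region, or for a single input, the same computation could in principle serve the global, local, and instance-wise tests described above.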

The code for this paper is released on GitHub.

@inproceedings{liu2024full,
  title={Full Bayesian Significance Testing for Neural Networks},
  author={Liu, Zehua and Li, Zimeng and Wang, Jingyuan and He, Yue},
  booktitle={Proceedings of the Thirty-Eighth AAAI Conference on Artificial Intelligence},
  year={2024}
}